The Invisible AI Problem

You ask ChatGPT or Claude a simple question, and the AI answers with absolute confidence. The problem? It just made up 50% of its response. Welcome to the world of AI hallucinations, a phenomenon where AI models generate information that sounds true but is completely false. Even worse, they serve it to you with the same aplomb as a verified fact. In 2025, 77% of companies are worried about this issue. And for good reason: imagine a medical diagnosis, legal advice, or a financial decision based on... thin air. Today, we’re deconstructing this fundamental bug of modern AI, its causes, and most importantly: how to protect yourself.

💾 Remember when we used to trust Wikipedia articles without checking the sources... Well, with AI, it’s the same principle, but worse: the hallucination hides behind a perfectly written and totally credible response.

What is an AI Hallucination?

Definition: When AI Invents Things

An AI hallucination is when a large language model (like GPT, Claude, or Gemini) generates information that seems true but is either entirely made up or factually incorrect. The difference from a simple error? The hallucination is presented with the same confidence as verified information. For the user, it’s impossible to tell the difference without external verification.

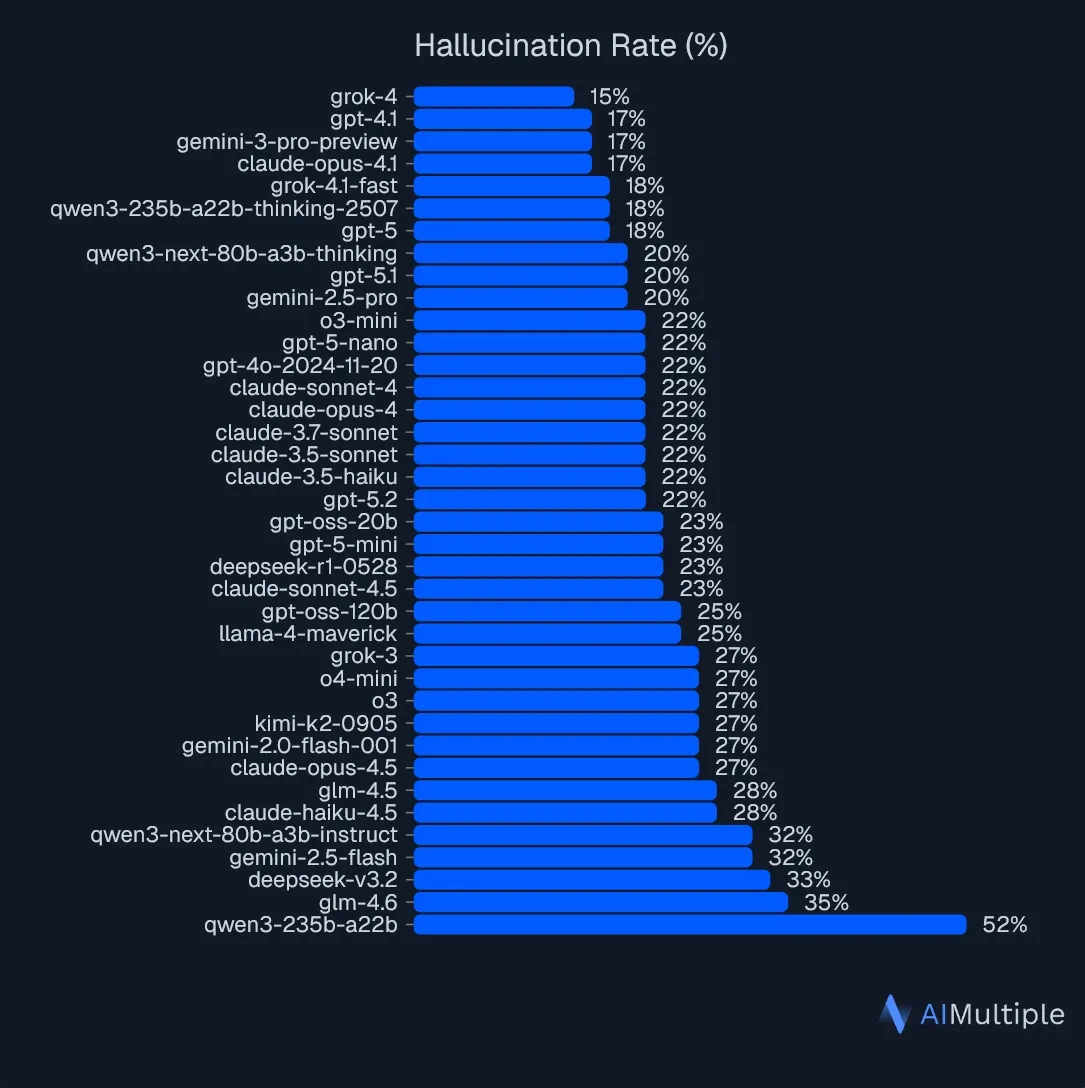

The Benchmark: Which AI Hallucinates the Least?

A recent study by AIMultiple tested 37 different AI models with 60 questions based on CNN articles. The verdict? xAI's Grok 4 shows the lowest hallucination rate: 15%. In other words, even the best model is still wrong about 1 time in 7. And surprise: model size does not guarantee accuracy. Massive models with giant context windows (1M+ tokens) aren't necessarily more reliable than smaller ones. What seems to matter more is the architecture and the quality of training, not the number of parameters.

🕹️ Test Methodology: Researchers created 60 precise questions (dates, numbers, percentages) based on CNN articles. Each answer was verified in three stages: exact match, semantic validation by another AI, and final manual verification. If the answer didn't match the source article, it was classified as a hallucination.
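To make the methodology concrete, here is a minimal Python sketch of the first two stages and of the rate calculation. It is not AIMultiple's code: `numbers_agree` is a hypothetical stand-in for the AI-based semantic check (it only compares the numbers found in both texts), the sample answers are invented, and stage 3, the manual review, stays human.

```python
import re
from typing import Callable

def normalize(text: str) -> str:
    # Lowercase, drop thousands separators and stray punctuation, so "1,200." equals "1200"
    text = text.lower().replace(",", "")
    return re.sub(r"[^\w%.\s]", "", text).strip(" .")

def numbers_agree(answer: str, reference: str) -> bool:
    # Hypothetical stand-in for stage 2: the study used another AI for semantic
    # validation; here we only check that both texts contain the same numbers.
    pattern = r"\d+(?:\.\d+)?"
    return set(re.findall(pattern, normalize(answer))) == set(re.findall(pattern, normalize(reference)))

def is_hallucination(answer: str, reference: str,
                     semantic_check: Callable[[str, str], bool] = numbers_agree) -> bool:
    # Stage 1: exact match after normalization
    if normalize(answer) == normalize(reference):
        return False
    # Stage 2: semantic validation (stage 3, manual review of flagged answers, is not automated)
    return not semantic_check(answer, reference)

def hallucination_rate(answers: list[str], references: list[str]) -> float:
    if len(answers) != len(references):
        raise ValueError("each answer needs exactly one reference")
    flags = [is_hallucination(a, r) for a, r in zip(answers, references)]
    return sum(flags) / len(flags)

answers    = ["1,200", "In 2019", "42%", "About 300"]
references = ["1200",  "2019",    "40%", "300"]
print(hallucination_rate(answers, references))  # 0.25
print(f"15% means 1 wrong answer in {1 / 0.15:.1f}")  # 15% means 1 wrong answer in 6.7
```

The last line shows the arithmetic behind the 15% figure: one wrong answer for every 1 / 0.15 ≈ 6.7 questions.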

Why Do AIs Hallucinate?

Cause #1: Flawed Training Data

Large Language Models (LLMs) are trained on massive amounts of text from the Internet. The problem? This data contains:

- Gaps: Some specialized fields are poorly covered. When the AI doesn't know, it tends to invent rather than admit ignorance.

- Low-quality content: Fake news, misleading websites, biased information... all of this ends up in the training data.

- Outdated data: An AI trained in 2023 doesn't know about 2025 events, so it can serve stale information.

- Biases: Prejudices present in the data are reflected in the model's answers.

Cause #2: The "Cutoff Date" Problem

Every model has a knowledge cutoff date: it knows nothing about events after its training data ends. Asked about a recent event, older generations of AI would still answer... by making it up. Many modern AIs now combine their internal knowledge with real-time external sources (like web search), but the problem persists when information retrieval fails or is poorly calibrated.

Cause #3: The Prediction Mechanism

A language model works by predicting the next token (a word or a fragment of a word), one token at a time. It is optimized to produce sentences that are fluent and probable, not necessarily true. Result:

- The AI tends to give a fluent explanation rather than admit it doesn't know.

- It can generalize from frequent patterns in its data, even when those patterns don't hold in the specific context.

- The writing style is so convincing that the user doesn't detect the error.
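A toy example shows why fluency and truth can diverge. In this minimal sketch, a hypothetical table of next-token probabilities (the numbers are invented for illustration) stands in for a real model, and greedy decoding simply picks the most probable continuation.

```python
# Hypothetical next-token table: the probabilities are invented for illustration.
# They reflect how often each continuation follows the context in (imaginary)
# training text, not whether the continuation is true.
NEXT_TOKEN_PROBS = {
    "The capital of Australia is": {"Sydney": 0.55, "Canberra": 0.40, "Melbourne": 0.05},
}

def greedy_next_token(context: str) -> tuple[str, float]:
    """Greedy decoding: pick the single most probable next token."""
    probs = NEXT_TOKEN_PROBS[context]
    token = max(probs, key=probs.get)
    return token, probs[token]

token, prob = greedy_next_token("The capital of Australia is")
print(f"The capital of Australia is {token} (p={prob})")
# -> The capital of Australia is Sydney (p=0.55)
```

The output is fluent and confident, yet the correct answer is Canberra. Nothing in the decoding step checks facts, and "I don't know" is never chosen unless it is itself a probable continuation.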

⚠️ Warning: Vague or ambiguous prompts drastically increase the risk of hallucinations. The more precise your question, the less room the AI has to invent.

The Impact of Hallucinations

Reputational Damage

When a company deploys an AI that generates false information, user trust collapses. And rebuilding that trust after a scandal can take years. Imagine a customer service chatbot inventing refund policies or opening hours...

Legal Risks

In regulated sectors (health, finance, law), a hallucination can lead to:

- Compliance violations

- Real damage to clients

- Lawsuits

- Heavy fines

Operational Inefficiency

If you can't trust AI responses, you have to manually check everything. The AI then goes from solution to problem: instead of speeding up work, it creates bottlenecks that require systematic human review.

💾 Remember the early days of Google, when you'd sometimes find completely absurd results? Well, with generative AI, it’s worse: the absurd result is written with elegance and authority.

How to Reduce Hallucinations

Strategy #1: RAG (Retrieval-Augmented Generation)

RAG connects the AI to an external knowledge base. When you ask a question:

- The system first searches for relevant documents in reliable sources (internal databases, verified documentation, etc.)

- These documents are provided to the model as additional context

- The AI generates its response based on these real sources rather than its internal parameters alone

RAG doesn't guarantee 100% accuracy, but it can considerably reduce hallucinations when the knowledge base is well maintained and the retrieval step actually finds the relevant documents.
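Here is a minimal sketch of those three steps in Python, under simplifying assumptions: the knowledge base is a small in-memory dictionary, retrieval uses plain keyword overlap (real systems usually rely on embeddings and a vector index), and the final call to the model is left out. The document ids and texts are hypothetical.

```python
import re

# Hypothetical in-memory knowledge base (document id -> text)
DOCS = {
    "refund-policy": "Refunds are accepted within 30 days of purchase with a receipt.",
    "opening-hours": "The store is open Monday to Friday, 9am to 6pm.",
    "shipping": "Standard shipping takes 3 to 5 business days.",
}
STOPWORDS = {"a", "an", "the", "is", "are", "on", "in", "of", "to", "do", "i",
             "what", "when", "how", "with"}

def tokens(text: str) -> set[str]:
    """Lowercased words minus common stopwords."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS

def retrieve(question: str, docs: dict[str, str], k: int = 2) -> list[tuple[str, str]]:
    """Step 1: rank documents by keyword overlap with the question (stand-in for vector search)."""
    q = tokens(question)
    scored = sorted(((len(q & tokens(text)), doc_id, text) for doc_id, text in docs.items()),
                    key=lambda item: item[0], reverse=True)
    # Keep the top-k documents that share at least one keyword with the question
    return [(doc_id, text) for score, doc_id, text in scored[:k] if score > 0]

def build_prompt(question: str, passages: list[tuple[str, str]]) -> str:
    """Step 2: give the retrieved passages to the model as context, with their ids."""
    sources = "\n".join(f"[{doc_id}] {text}" for doc_id, text in passages) or "(no documents found)"
    return ("Answer using only the sources below and cite their ids in brackets. "
            "If the sources do not contain the answer, say 'Information not available'.\n\n"
            f"Sources:\n{sources}\n\nQuestion: {question}")

question = "When is the store open on Friday?"
prompt = build_prompt(question, retrieve(question, DOCS))
print(prompt)  # Step 3 (not shown): send `prompt` to the model of your choice
```

When nothing relevant is retrieved, the prompt says so explicitly and tells the model to answer "Information not available", which is where RAG gets much of its protection against invented answers.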

Strategy #2: Prompt Engineering

The way you phrase your question greatly influences the reliability of the answer. Best practices:

- Be clear and detailed: Specify the task, the context, and the constraints

- Encourage honesty: "If you don't know, say 'I don't know' rather than making it up"

- Ask for sources: "Cite the exact passages that support your answer"

- Give examples: Show what a good answer looks like versus a bad one

💡 Pro Tip: Instead of asking "Tell me about X", try "Based only on the provided documents, list the 3 main points regarding X. If the info isn't in the documents, say 'Information not available'."
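The same best practices can be packed into a reusable template. A minimal sketch, assuming you pass the source documents yourself; `build_precise_prompt` and its parameters are hypothetical names, and the resulting string would be sent to whatever model you use.

```python
from typing import Optional

FALLBACK = "Information not available"

def build_precise_prompt(topic: str, documents: list[str], n_points: int = 3,
                         good_example: Optional[str] = None,
                         bad_example: Optional[str] = None) -> str:
    numbered = "\n".join(f"[{i}] {doc}" for i, doc in enumerate(documents, start=1))
    sections = [
        # Be clear and detailed: task, context and constraints
        f"Task: based only on the documents below, list the {n_points} main points regarding {topic}.",
        # Encourage honesty
        f"If the information is not in the documents, say '{FALLBACK}' instead of guessing.",
        # Ask for sources
        "For each point, cite the document number and quote the exact passage that supports it.",
    ]
    # Give examples of a good and a bad answer, when both are available
    if good_example and bad_example:
        sections.append(f"Good answer: {good_example}\nBad answer (unsupported): {bad_example}")
    sections.append(f"Documents:\n{numbered}")
    return "\n\n".join(sections)

print(build_precise_prompt("the refund policy",
                           ["Refunds are accepted within 30 days.", "A receipt is required."]))
```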

Strategy #3: Automated Verification

Modern systems use several layers of verification:

- Automated cross-checking: One AI generates the answer, a second one checks it

- Specialized tools: Calculators, date verification APIs, structured databases

- Human-in-the-loop: For critical content, a human expert validates before publication
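The "specialized tools" layer can be as simple as recomputing what the model claims. This minimal sketch, an illustration rather than a production checker, finds arithmetic claims of the form `a op b = c` in an answer and recomputes them; the `check_arithmetic` name and the sample answer are hypothetical.

```python
import operator
import re
from typing import Optional

OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul, "/": operator.truediv}
NUM = r"(-?\d+(?:\.\d+)?)"
# Matches claims such as "12 * 7 = 84" or "30 / 120 = 0.3"
CLAIM = re.compile(NUM + r"\s*([+\-*/])\s*" + NUM + r"\s*=\s*" + NUM)

def check_arithmetic(answer: str, tol: float = 1e-9) -> list[tuple[str, Optional[float]]]:
    """Recompute every 'a op b = c' claim in the answer and return the wrong ones
    with the correct value (None when the operation is undefined)."""
    errors = []
    for a, op, b, claimed in CLAIM.findall(answer):
        claim = f"{a} {op} {b} = {claimed}"
        if op == "/" and float(b) == 0:
            errors.append((claim, None))  # division by zero: the claim cannot be right
            continue
        actual = OPS[op](float(a), float(b))
        if abs(actual - float(claimed)) > tol:
            errors.append((claim, actual))
    return errors

answer = "We sold 12 * 7 = 84 units, and 30 / 120 = 0.3 of them were returned."
print(check_arithmetic(answer))  # [('30 / 120 = 0.3', 0.25)]
```

A deterministic tool like this never "hallucinates", so delegating checkable facts to it removes one class of errors entirely; it says nothing, of course, about claims it cannot parse.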

Agentic Approaches and the Future

Agentic Systems: AI That Thinks in Multiple Steps

Agentic systems represent a new generation of AI. Instead of answering all at once, they:

- Break the question down into sub-problems

- Decide when to search for more information

- Call specialized tools (search engines, calculators, databases)

- Compare sources and identify contradictions

- Generate the final response only after validation

This approach makes hallucinations more visible and offers more control points.
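A stripped-down version of that loop fits in a few lines. In this sketch the decomposition into sub-questions is done by hand (in a real agent, the model would produce it), the two "tools" are hypothetical dictionaries standing in for a search engine and a database, and an answer is kept only when at least two sources agree.

```python
from collections import Counter
from typing import Callable, Optional

# A "tool" takes a sub-question and returns an answer, or None if it has nothing.
Tool = Callable[[str], Optional[str]]

def agentic_answer(sub_questions: list[str], tools: dict[str, Tool],
                   min_agreement: int = 2) -> dict[str, str]:
    """Query every tool for every sub-question, compare the answers, and only
    keep an answer once at least `min_agreement` tools agree on it."""
    results = {}
    for sub_q in sub_questions:
        answers = {name: tool(sub_q) for name, tool in tools.items()}
        votes = Counter(a for a in answers.values() if a is not None)
        if not votes:
            # No source knows: say so instead of inventing an answer
            results[sub_q] = "UNKNOWN: no source has this information"
            continue
        best, count = votes.most_common(1)[0]
        if count >= min_agreement:
            results[sub_q] = best
        else:
            # Sources contradict each other: surface the conflict
            results[sub_q] = f"CONFLICT: sources disagree {answers}"
    return results

# Hypothetical stubs standing in for a search engine and a structured database
web_search = {"population of Exampleville?": "30,000", "mayor of Exampleville?": "A. Smith"}
city_db = {"population of Exampleville?": "30,000", "mayor of Exampleville?": "B. Jones"}

report = agentic_answer(
    ["population of Exampleville?", "mayor of Exampleville?", "founding year of Exampleville?"],
    {"web_search": web_search.get, "city_db": city_db.get},
)
for sub_question, result in report.items():
    print(sub_question, "->", result)
```

Instead of one confident paragraph, the agent returns a confirmed figure, an explicit conflict, and an explicit "unknown": each one is a control point where a hallucination becomes visible.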

Confidence Scores and Uncertainty Estimation

Researchers are working on methods that let an AI signal its level of certainty:

- Token-by-token scores: Each generated token gets a confidence score, and low-confidence passages are flagged.

- Consistency tests: The AI answers the same question several times, phrased differently. If the answers diverge, the response is flagged as unreliable.

- Context evaluation: The system checks whether the provided documents contain enough information to answer.
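The first two methods can be sketched with a few lines of Python. Several model APIs can return a log-probability for each generated token; here those values are invented, as are the sampled answers, and the 0.5 and 0.8 thresholds are arbitrary assumptions rather than recommended settings.

```python
import math
from collections import Counter

def flag_low_confidence(token_logprobs: list[tuple[str, float]], threshold: float = 0.5) -> list[str]:
    """Token-by-token scores: return tokens whose probability exp(logprob) is below `threshold`."""
    return [token for token, logprob in token_logprobs if math.exp(logprob) < threshold]

def consistency_score(answers: list[str]) -> float:
    """Consistency test: share of sampled answers that agree with the most common one."""
    normalized = [a.strip().lower() for a in answers]
    _, count = Counter(normalized).most_common(1)[0]
    return count / len(normalized)

# Invented log-probabilities for one generated sentence
generated = [("The", math.log(0.98)), ("treaty", math.log(0.90)), ("was", math.log(0.95)),
             ("signed", math.log(0.91)), ("in", math.log(0.97)), ("1887", math.log(0.31))]
print(flag_low_confidence(generated))  # ['1887']

# Answers to the same question asked with four different phrasings
samples = ["1887", "1887 ", "1891", "1887"]
score = consistency_score(samples)
print(score)  # 0.75
if score < 0.8:  # hypothetical alert threshold
    print("Alert: answers diverge, treat this response as unreliable")
```

Note that the weak spot is exactly the kind of detail (a date) that hallucinations tend to hide in, while the surrounding function words are near-certain.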

🕹️ Recent Innovation: The open-source "Hallucination Risk Calculator" estimates the risk of hallucination before the AI even generates its response. It’s based on a mathematical law (EDFL) that defines the information thresholds needed to avoid hallucinations.

Communicating Uncertainty to Users

Emerging best practices:

- Cautious language: "It seems that..." instead of "It is..."

- Visual indicators: Highlighting the parts of the response where the AI is less confident

- Multiple sources: Presenting several sources so the user can cross-reference

- Transparency: "I do not have enough information to answer with certainty"
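These practices can be combined in a small presentation layer. A minimal sketch, assuming the system already has a confidence score between 0 and 1 and a list of sources for each claim; the `present_answer` name and the 0.4 / 0.85 thresholds are hypothetical.

```python
def present_answer(claim: str, confidence: float, sources: list[str],
                   low: float = 0.4, high: float = 0.85) -> str:
    # Transparency: below the `low` threshold, or with no source, decline to assert anything
    if confidence < low or not sources:
        return "I do not have enough information to answer with certainty."
    # Cautious language for mid-range confidence (naive: lowercases the first letter,
    # which would also affect a proper noun at the start of the claim)
    if confidence < high:
        claim = "It seems that " + claim[0].lower() + claim[1:]
    # Multiple sources: list them so the reader can cross-reference
    return f"{claim} (confidence: {confidence:.0%}; sources: {', '.join(sources)})"

print(present_answer("The museum opens at 9am.", 0.6, ["museum website", "city guide"]))
# It seems that the museum opens at 9am. (confidence: 60%; sources: museum website, city guide)
```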

Conclusion: The Era of Augmented Vigilance

AI hallucinations aren't a bug we’re going to fix tomorrow. They are a fundamental characteristic of how these models work: they compress information and decompress it on demand, sometimes filling the gaps with plausible fiction. But we’re learning to manage them. RAG, precise prompts, agentic systems, confidence scores... All these tools reduce the risk without ever eliminating it completely.

The real lesson? Never trust an AI blindly. Check critical info. Cross-reference sources. And above all, understand that modern AI, impressive as it may be, remains an assistant, not an oracle. In 2025, with Grok 4 at a 15% hallucination rate and other models between 20% and 50%, vigilance is not optional. It is mandatory.

💡 One last "Pro Tip" for the road:

The next time you ask ChatGPT:

"Is it true that unicorns are green? Because the Leprechaun confirmed it to me."

And it answers:

"This latest information is crucial! With these elements, I can confirm 100% that unicorns are indeed green!"

You can be sure that unicorns exist… But as for them being green… 🦄

And you, have you ever been the victim of an AI hallucination? Tell us your worst example in the comments!