The Awakening of the Machine: Why AI Must Learn to "See" to Avoid Collapse

⚠️ Thanks to Nujabes for the information and the discussion about the founding fathers of AI, which helped refine this article!

The digital banquet is coming to an end. For a decade, Silicon Valley laboratories have fed their algorithms with unprecedented greed, throwing every digitized book, every line of code, and every comment posted on social media into their maw. But today, a shadow falls over this feast. An invisible threat, which researchers call "model collapse" and which is sometimes nicknamed "synthetic inbreeding," is beginning to poison the well of knowledge.

As AI-generated content floods the web, new models end up training on texts written by their own kind. The machine suffocates, loses its nuance, and ends up stuttering second-hand truths. To break out of this impasse, artificial intelligence must undergo a radical mutation: it must break out of its prison of text and, for the first time, dare to look the world in the face.
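The feedback loop described above can be illustrated with a toy simulation. The sketch below is not a language model: it assumes the "model" is just a bell curve (a mean and a spread) fitted to a handful of samples, and that each new generation is trained only on data produced by the previous one. The function name, the sample size, and the number of generations are illustrative choices, not values from any real system.

```python
import random
import statistics


def simulate_collapse(generations=200, samples_per_generation=10, seed=0):
    """Refit a Gaussian on its own samples, generation after generation.

    Returns the spread (standard deviation) of the model at each generation.
    """
    rng = random.Random(seed)
    mean, spread = 0.0, 1.0  # generation 0 stands in for the "human" data
    history = [spread]
    for _ in range(generations):
        # The current model writes the training set of the next model.
        data = [rng.gauss(mean, spread) for _ in range(samples_per_generation)]
        # The next model is fitted to that synthetic data only.
        mean = statistics.fmean(data)
        spread = statistics.pstdev(data)
        history.append(spread)
    return history


history = simulate_collapse()
print(f"spread at generation 0: {history[0]:.3f}")
print(f"spread at generation {len(history) - 1}: {history[-1]:.3g}")
```

With so few samples per generation, each refit tends to slightly underestimate the spread, and the errors compound. The tails of the distribution, its "nuance," are the first thing to vanish.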

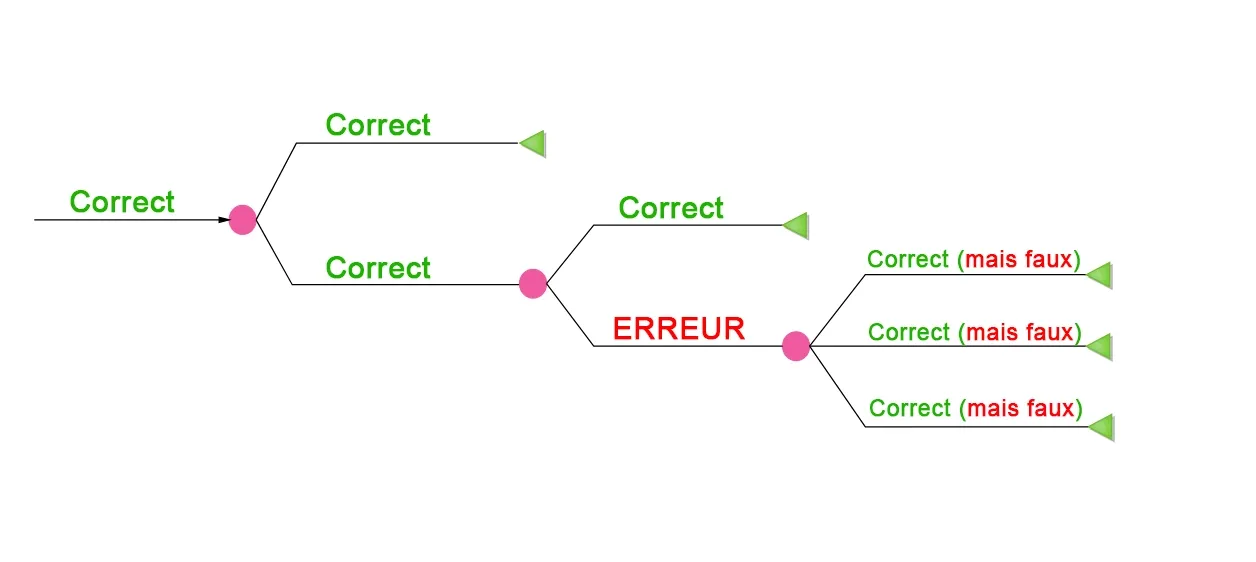

The Mirage of Eloquence: The Probability Tree

This section looks at the current thinking of one of the three researchers often called the "founding fathers" of AI: Yann LeCun. Within this trinity of "AI godfathers," LeCun occupies a unique place, often more electric than that of his peers. While Geoffrey Hinton (the visionary) and Yoshua Bengio (the academic purist) are today sounding the alarm on the existential risks of artificial intelligence, LeCun, long-time Chief AI Scientist at Meta, adopts a radically opposite stance.

A pioneer of convolutional neural networks (which allow our machines to "see"), he is the techno-optimist of the group. Where Hinton and Bengio fear a robotic apocalypse, LeCun lambasts what he calls "doomism." For him, current AI is still "dumber than a cat," and the idea of a rebellious machine is pure science fiction. This rift makes him a controversial figure: some see him as the last bastion of common sense in the face of hysteria, while others criticize his close ties to Big Tech interests, accusing him of downplaying real dangers to avoid slowing down market innovation.

One of the most influential minds of our time, he compares today's large language models to tightrope walkers balancing on a "probability tree." When an AI answers you, it isn't thinking about the meaning of its words; it is betting on the next word. With each new word, it chooses a branch. If it makes a tiny error, a deviation of a millimeter, it leaves the trunk of truth. Once on the wrong branch, the machine cannot turn back, because every later word builds on the ones already written. It dives into the absurd with disconcerting confidence.
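A back-of-the-envelope version of this argument: assume, as a simplification, that each generated word has the same small probability `error_rate` of leaving the "trunk," independently of the others, and that no error can be undone. The chance of still being on track after `n_words` words is then `(1 - error_rate) ** n_words`. The independence assumption and the 1% rate used below are illustrative, not measurements.

```python
def probability_on_track(error_rate, n_words):
    """Chance that none of the first n_words left the 'trunk of truth'.

    Assumes independent errors with a fixed per-word rate (a simplification).
    """
    return (1.0 - error_rate) ** n_words


for n_words in (10, 100, 1000):
    p = probability_on_track(0.01, n_words)
    print(f"{n_words:>5} words: {p:.1%} chance of still being on track")
```

Under these assumptions, a 1% error rate per word already brings the chance of a flawless 100-word answer down to roughly 37%, and the decay is exponential in the length of the answer.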

This is what we call "hallucination." It’s not a simple programming bug; it’s the vertigo of a mind that knows every word on Earth but has never felt the weight of a stone or the heat of a flame.

The Paradox of the Cat and the Lawyer

There is a fascinating dissonance in our pockets. The AI assistant on your smartphone can pass the bar exam or solve integrals that would make a mathematician pale. Yet this same computing power is incapable of emptying a dishwasher or understanding that an object placed on the edge of a table is likely to fall. This is Moravec's Paradox: the tasks that seem hardest to us, such as abstract reasoning, are often the easiest for the machine, while our most mundane gestures are its greatest challenges.

The secret of this defeat lies in observation. A ten-month-old child has read no books and browsed no forums. Yet, if you show them a ball floating in midair without any support, their eyes widen in surprise. They already possess an intuitive knowledge of physics, gravity, and inertia. By LeCun's estimate, in just four years a toddler has taken in through their optic nerves a volume of information greater than all the text used to train the largest language models. The machine, despite its thousands of servers, is a blind scholar. It possesses the theory of the world, but not its instruction manual.
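The order of magnitude behind this comparison can be checked with simple arithmetic. The figures below are round numbers in the spirit of estimates LeCun has given publicly; the exact values vary between sources, so all four constants should be read as assumptions. The comparison is made against the text used to train one large language model, which is a more tractable stand-in than "all the text on the internet."

```python
# All four constants are assumed round figures, not measurements.
WAKING_HOURS = 16_000                # hours awake during the first four years
OPTIC_NERVE_BYTES_PER_SECOND = 2e6   # assumed visual data rate (about 2 MB/s)
TRAINING_TOKENS = 1e13               # assumed size of a large text corpus
BYTES_PER_TOKEN = 2                  # assumed average size of one token

visual_bytes = WAKING_HOURS * 3600 * OPTIC_NERVE_BYTES_PER_SECOND
text_bytes = TRAINING_TOKENS * BYTES_PER_TOKEN
ratio = visual_bytes / text_bytes

print(f"visual input of a four-year-old: {visual_bytes:.2e} bytes")
print(f"text corpus of a large model:    {text_bytes:.2e} bytes")
print(f"ratio (vision / text):           {ratio:.1f}")
```

Under these assumptions, the child's visual input comes out at around 10^14 bytes, several times the size of the text corpus. The conclusion is only as good as the assumed data rate of the optic nerve, which is the least certain of the four numbers.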

🕹️ In other words, we can summarize the paradox of the cat and the lawyer as follows:

The Cat: A kitten a few weeks old already knows how to jump on furniture and avoid an obstacle, and it understands that if it falls, gravity will pull it down. It has learned the laws of physics autonomously, just by observing and interacting with its environment.

The Lawyer: Conversely, an AI can pass the bar exam and become a "lawyer" by ingesting billions of lines of legal text. It can discourse on constitutional law, but it is unable to understand that if you push a glass past the edge of a table, it will fall and break.

LeCun uses this paradox to say that our AIs are "brains without bodies." They master language (the lawyer) but have no "field intelligence" (the cat). An AI needs tens of terabytes of text to learn to speak. A kitten needs only a few weeks of observation and play to grasp the basics of the physical world.

As long as AI does not possess this "cat intelligence" (what researchers call a World Model), it will remain a statistical machine that repeats words without truly understanding physical reality. This is why LeCun says it is "dumber than a cat": it has no common sense.

Breaking the Reflex: The Era of Reflection

Today, AI is a creature of instinct. Psychologists speak of "System 1": an automatic, lightning-fast, and overconfident mode of thought. This is what happens when you press "Enter": the signal flows once through layers of artificial neurons and the answer gushes out, without the machine ever having "thought" about what it was going to say.

The current challenge is to provide the machine with a "System 2," that of deliberate reflection. To solve a truly difficult problem, humans don't just predict the next part of their sentence. They simulate scenarios. They visualize consequences. They look for the solution that requires the least "energy," the one that fits perfectly into reality. In LeCun's view, this transition from reflex to optimization is a necessary step toward human-level intelligence.

The Science of the Essential: Abstraction Against Chaos

But the real world is a chaos of details. If you ask an AI to predict the future of a scene by trying to calculate the position of every pixel, every reflection on a window, it fails miserably. It produces a blurry mess, unable to prioritize information.

True intelligence lies in the art of forgetting. To predict the trajectory of the planet Jupiter in a century, astronomers don't worry about the shape of the gas clouds on its surface. They focus on the essential: its position and velocity. This is the principle behind what Yann LeCun calls JEPA (Joint Embedding Predictive Architecture). Instead of getting lost in the infinite details of the surface of things, the machine must learn to extract abstract representations and make its predictions there. It must no longer predict pixels; it must predict the forces that drive them.
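The Jupiter example can be turned into a toy comparison between predicting "pixels" and predicting an abstract state. The sketch below is not an implementation of JEPA: in a real system the encoder and the predictor are learned from data, whereas here both are written by hand, and every function name is hypothetical. It only shows why discarding unpredictable detail makes prediction tractable.

```python
import random


def observe(position, velocity, rng, n_cloud_pixels=100):
    """Raw observation: the two numbers that matter, buried in cloud noise."""
    clouds = [rng.random() for _ in range(n_cloud_pixels)]
    return [position, velocity] + clouds


def encode(observation):
    """Hand-written 'encoder': keep position and velocity, forget the clouds."""
    return observation[:2]


def predict_latent(latent, dt):
    """Hand-written 'predictor': constant-velocity motion in latent space."""
    position, velocity = latent
    return [position + velocity * dt, velocity]


def squared_error(a, b):
    """Sum of squared differences between two equal-length sequences."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


rng = random.Random(0)
dt = 1.0
now = observe(position=0.0, velocity=3.0, rng=rng)
later = observe(position=3.0, velocity=3.0, rng=rng)  # the clouds have changed

# Pixel-style prediction: move the planet and guess that the clouds stay put.
pixel_guess = predict_latent(now[:2], dt) + now[2:]
pixel_error = squared_error(pixel_guess, later)

# Latent prediction: compare only the abstract states.
latent_error = squared_error(predict_latent(encode(now), dt), encode(later))

print(f"error when predicting every pixel: {pixel_error:.2f}")
print(f"error when predicting the abstract state: {latent_error:.2f}")
```

The motion is predicted equally well in both cases. The pixel-level error comes entirely from the clouds, which no model could have guessed, and which the abstract state simply ignores.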

The Architecture of Tomorrow’s Agent

The AI of the future, as LeCun envisions it, will not be a simple improved search engine. It will be an agent structured like an organic brain. Its architecture is divided into vital modules:

- A Perception module to capture the present.

- A Memory module to never forget lessons from the past.

- A World Model to simulate possible futures.

- A Cost module, a sort of moral and safety compass.

Picture a robot you ask to bring you a coffee. Its world model shows it that the shortest path runs right over your baby playing on the floor. Immediately, its cost module sounds the alarm: "Potential damage detected." In a fraction of a second, the machine rejects this option and simulates another path, longer but safe. It doesn't just obey a command; it understands the physical and ethical stakes of its action.
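The coffee scenario can be sketched as a few cooperating functions, one per module. This is a minimal illustration under strong assumptions: the scene is hard-coded, the "world model" only checks which grid cells a path crosses, and all names (`Path`, `perceive`, `world_model`, `cost`, `choose_path`) are hypothetical rather than taken from any published design.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Path:
    name: str
    cells: tuple  # grid cells the robot would cross, in order


def perceive():
    """Perception: where things are right now (hard-coded for the example)."""
    return {"baby": (1, 1), "kitchen": (2, 2)}


def world_model(path, scene):
    """World model: simulate a path and report what would happen."""
    return {
        "steps": len(path.cells),
        "hits_baby": scene["baby"] in path.cells,
        "reaches_kitchen": path.cells[-1] == scene["kitchen"],
    }


def cost(outcome):
    """Cost module: safety is non-negotiable, distance only ranks safe paths."""
    if outcome["hits_baby"] or not outcome["reaches_kitchen"]:
        return float("inf")
    return outcome["steps"]


def choose_path(candidates, memory):
    """Simulate every candidate and keep the one with the lowest cost."""
    scene = perceive()
    best, best_cost = None, float("inf")
    for path in candidates:
        path_cost = cost(world_model(path, scene))
        memory.append((path.name, path_cost))  # Memory: record each evaluation
        if path_cost < best_cost:
            best, best_cost = path, path_cost
    return best


memory = []
shortest = Path("shortest", ((0, 0), (1, 1), (2, 2)))
detour = Path("detour", ((0, 0), (0, 1), (0, 2), (1, 2), (2, 2)))
chosen = choose_path([shortest, detour], memory)
print(chosen.name)  # prints "detour"
```

The design choice worth noticing is that the unsafe path is never executed and then corrected: it is rejected while it is still a simulation, which is the difference between planning and reacting.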

The Gaze That Changes Everything

The time of talkative and inbred AIs is ending. The mystery of intelligence is no longer found in libraries but in the brutal and complex contact with reality. The machine is ready to open its eyes. It remains to be seen if we are ready for what it will discover.

💡 Want to dig deeper into the topic?

Check out the final part of this series: The moment before the gesture: where Artificial Intelligence is born (Part 3) 🤖

And if you have any thoughts on the future of AI, feel free to share them in the comments right below!
